Assessment Design:

Building High-Rigor Analytical Item Banks

This high-rigor assessment system measures analytical reasoning and evidence-based decision-making. The project transformed complex source materials into performance-aligned assessments tied to measurable skill development and state standards.

Audience: Secondary English learners in grades 9–10 preparing for high-stakes, standards-aligned analytical assessments such as the English 2 EOC.

Responsibilities: Instructional Design, Learning Strategy, Item Writing, Distractor Engineering, Data Analysis

Tools Used: Grades 9–10 NC Standard Course of Study for English Language Arts (2017), Google Docs, Google Sheets, Formative, ChatGPT, Claude

Note: This assessment suite was developed and implemented for high-rigor high school English courses. Performance data reflects aggregated classroom outcomes from 2023–2026. Student information has been removed or anonymized to protect privacy.

Key Impact

Share of learners meeting the 70% proficiency benchmark rose from 65% to 78% after the assessment redesign

Written justification reduced guessing and increased analytical reasoning

Students exceeded state growth projections by about 16 points on average

91–93% of students outperformed modeled expectations

The Problem

Learners preparing for high-stakes assessments showed familiarity with content but struggled to apply higher-order reasoning during testing.

Existing practice activities emphasized recall and basic comprehension rather than the analytical thinking required by standards-aligned assessments. As a result, performance data was inconsistent and skill gaps were difficult to diagnose.

To address this gap, I designed a structured assessment system aligned with the North Carolina Standard Course of Study for English Language Arts (Grades 9–10).

The Solution

All assessments were originally administered on paper, which students preferred, but this delayed feedback. I later moved to a hybrid paper-digital format.

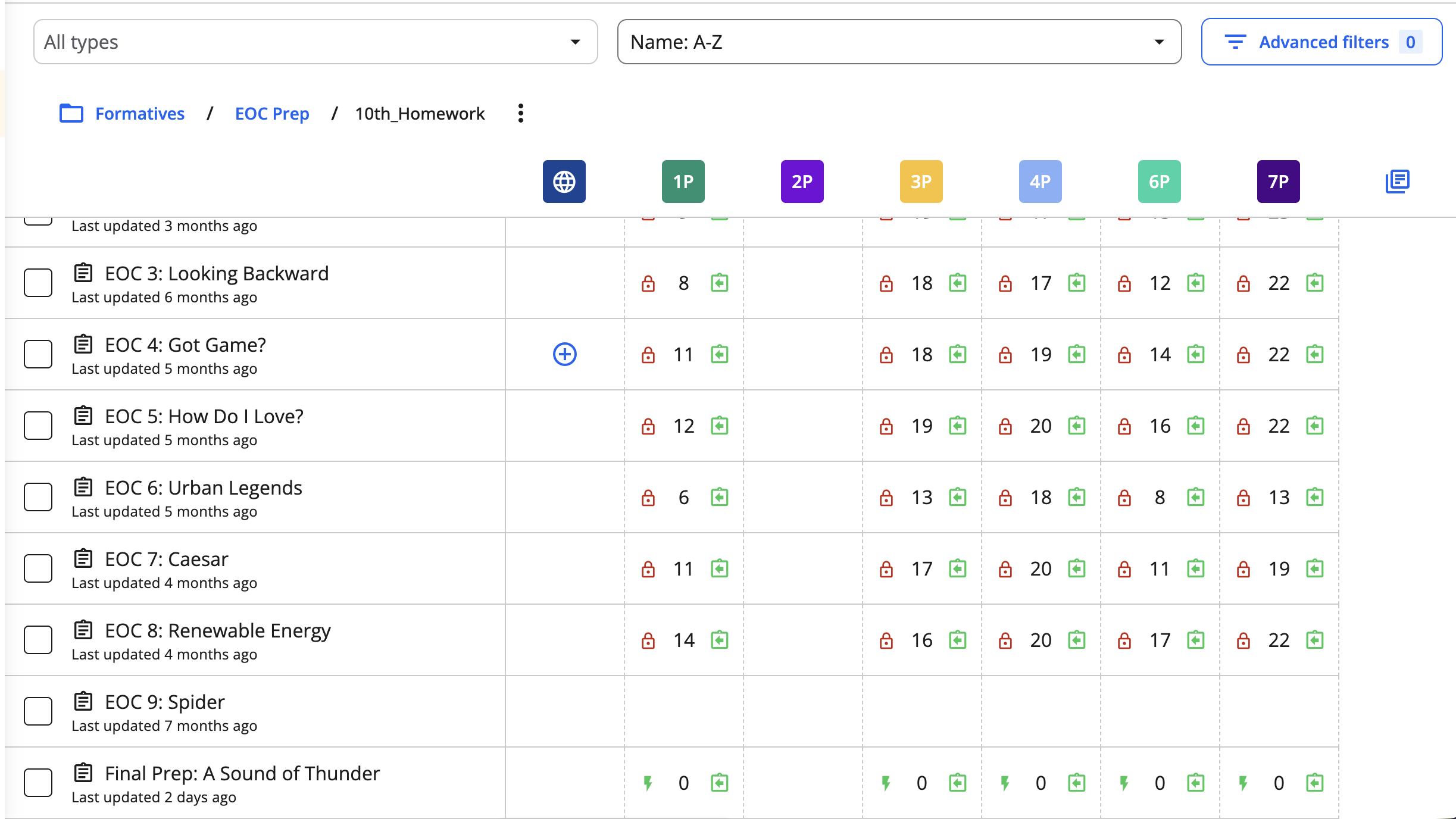

For digital administration, I chose Formative (by Newsela) as my assessment platform because it tracks and manages response data, even on a free account.

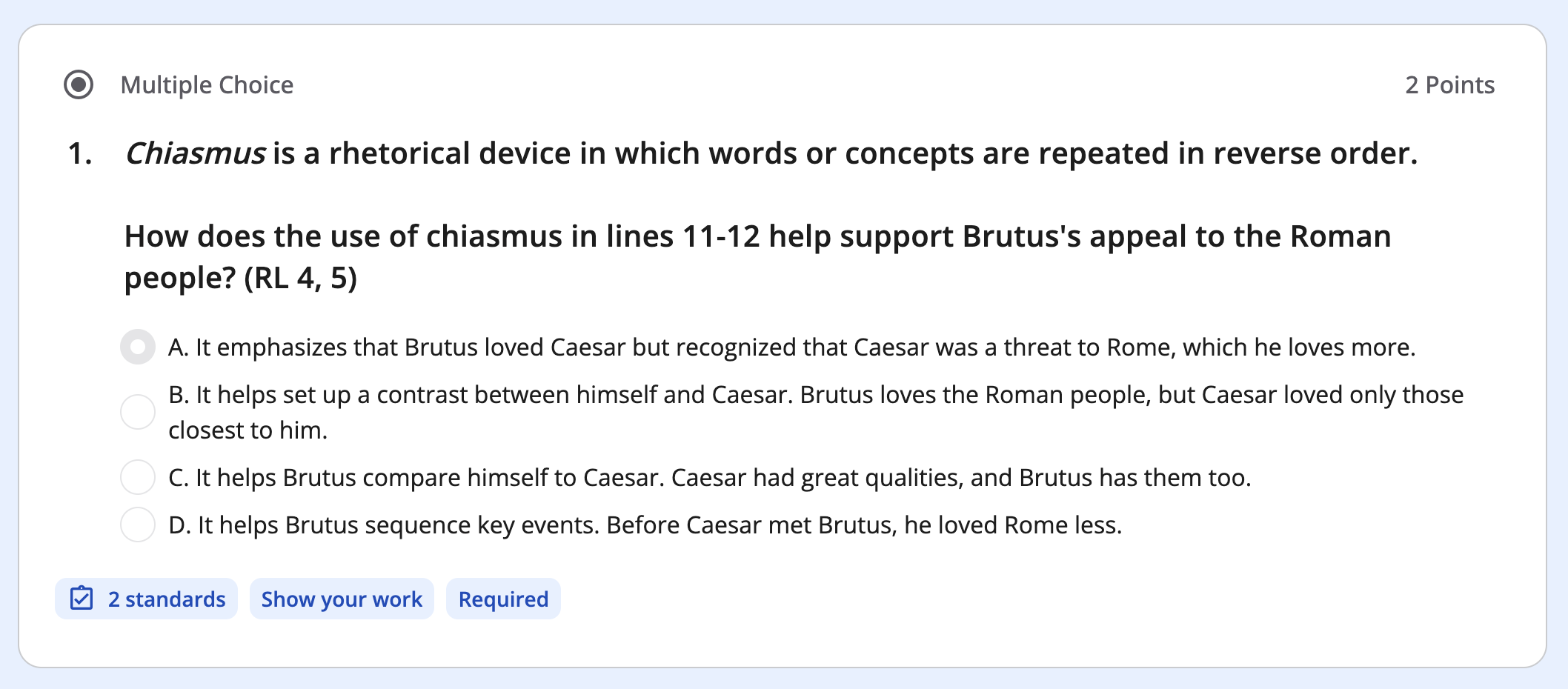

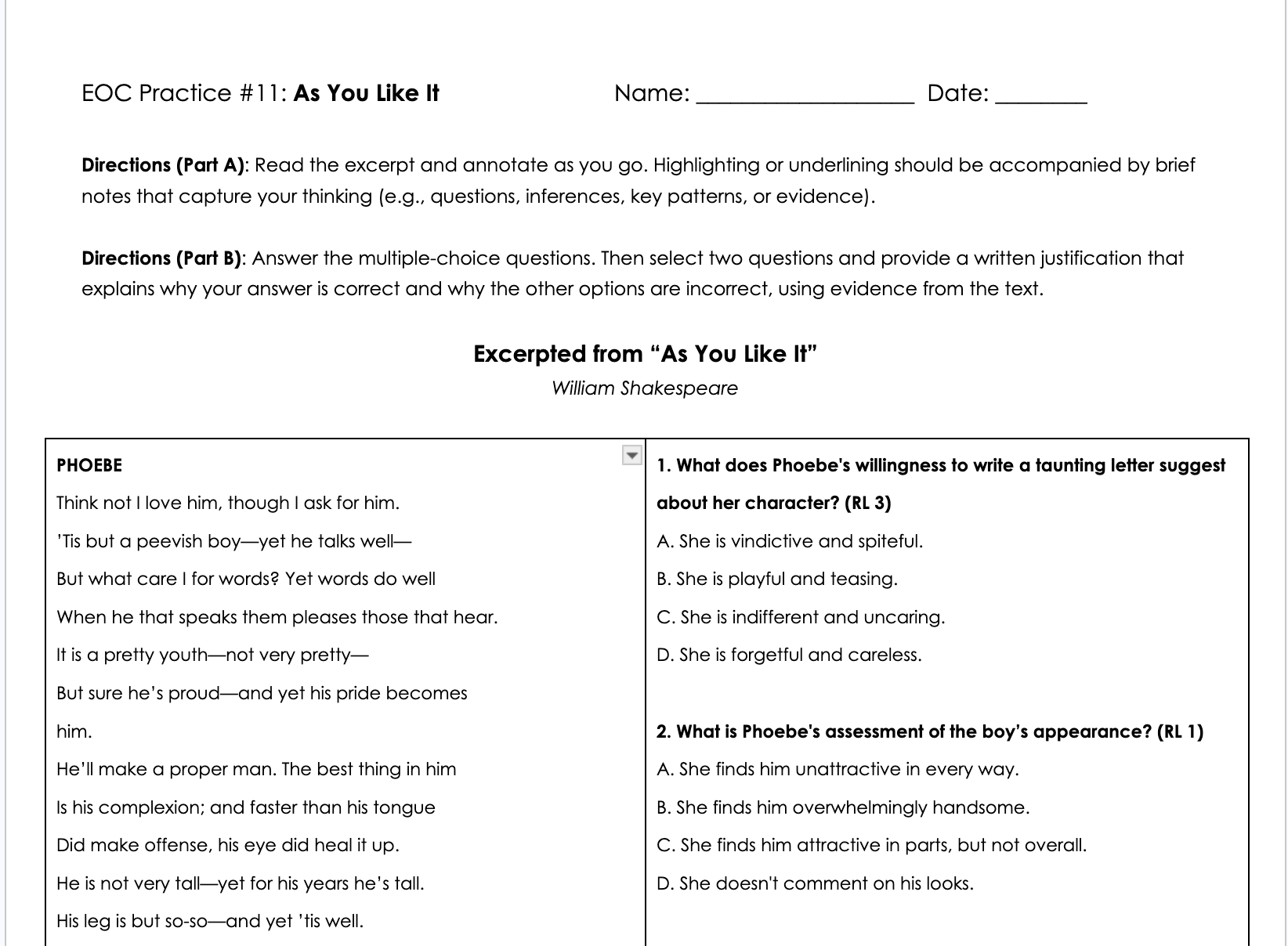

Each assessment began with clearly defined performance objectives. Complex source materials were selected to replicate authentic testing conditions, and multiple-choice items were designed to measure higher-order skills such as inference, synthesis, and evidence evaluation. Distractors were intentionally built around common learner misconceptions to increase diagnostic value.
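Tagging each distractor with the misconception it targets is what makes wrong answers diagnostic: counting which distractors a class chose points to the misconception that needs reteaching. The sketch below is a hypothetical illustration of that idea, not the workflow used in Formative or the original spreadsheets; the item, the misconception labels, and the responses are all invented for the example.

```python
from collections import Counter

# Hypothetical item: each answer option maps to what choosing it suggests.
# "correct" marks the key; the other labels name the misconception a distractor targets.
OPTION_TAGS = {
    "A": "correct",
    "B": "surface detail mistaken for central idea",
    "C": "inference not supported by the text",
    "D": "claim attributed to the wrong speaker",
}


def misconception_counts(responses):
    """Count how many learners chose each distractor, keyed by misconception.

    Correct answers and options not in OPTION_TAGS (e.g. blanks) are skipped.
    """
    counts = Counter()
    for choice in responses:
        tag = OPTION_TAGS.get(choice)
        if tag is not None and tag != "correct":
            counts[tag] += 1
    return counts


# Hypothetical responses from one class section.
responses = ["A", "C", "C", "B", "A", "C", "D", "A"]
for tag, n in misconception_counts(responses).most_common():
    print(f"{n} learner(s): {tag}")
```

In this made-up section, most wrong answers cluster on the unsupported-inference distractor, which would suggest reteaching how to check an inference against the text.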

In 2024–2025, I introduced a written justification component requiring learners to explain why the correct answer was valid and why distractors were incorrect. This shifted assessments from passive answer selection to active analytical reasoning and metacognitive reflection.

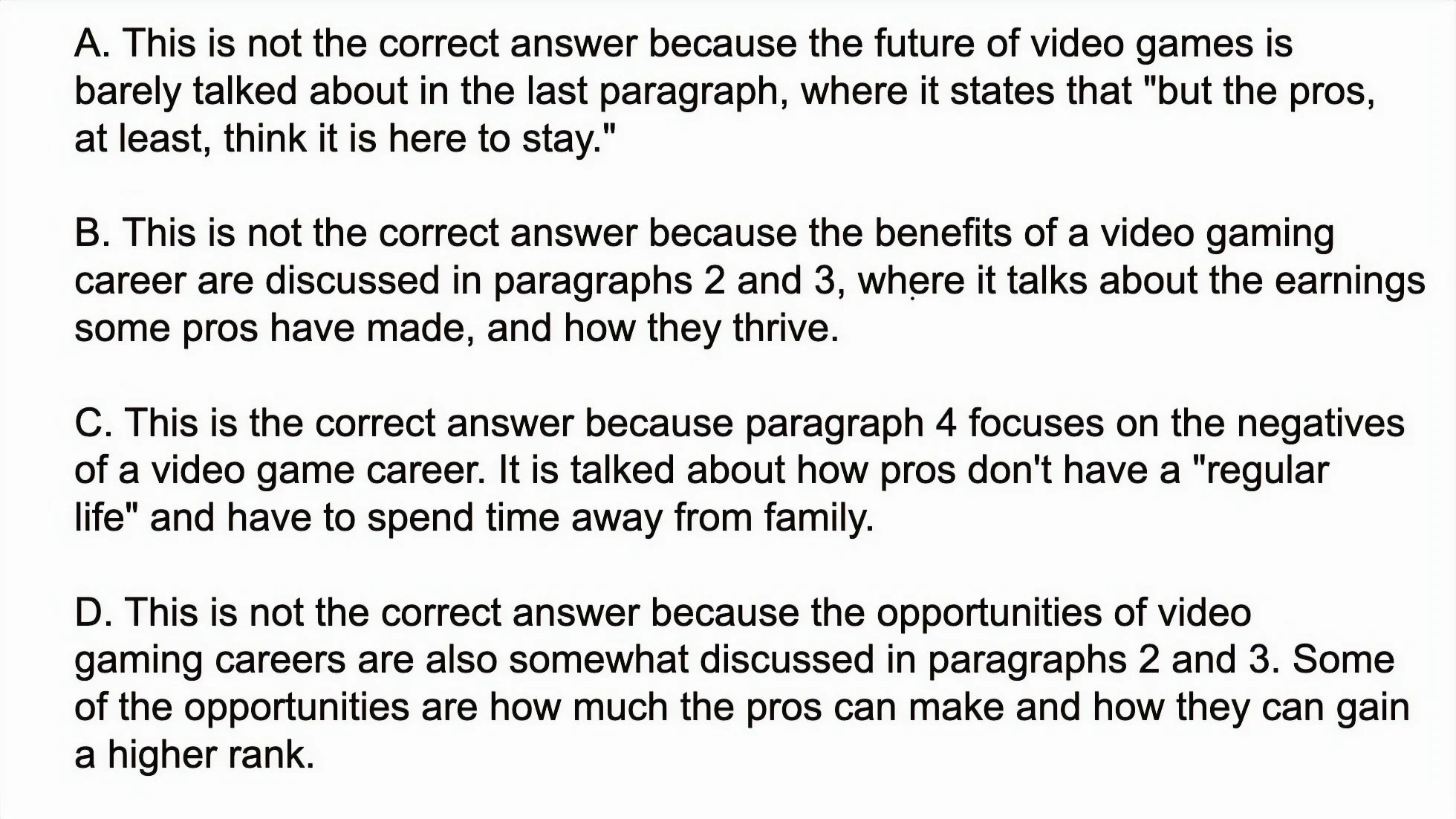

Sample written justification from a 10th-grade honors student responding to a nonfiction text about video gaming as a career option.

Assessments were continuously refined using performance data and learner feedback. Item wording, distractor quality, and test structure were iteratively improved to maintain appropriate rigor while balancing task duration and cognitive load.

Design Challenges

A key challenge was balancing analytical rigor with practical classroom constraints.

Learners preferred printed assessments because annotation supports deeper text analysis. However, paper-based administration significantly slowed grading, often delaying feedback by four days and limiting opportunities for timely correction.

To address this, I implemented a hybrid assessment model. Multiple-choice responses were submitted digitally through Formative for immediate scoring, while written justifications were evaluated manually. This reduced feedback turnaround to 24–48 hours, enabling learners to complete corrections during scheduled study periods.

Platform limitations created additional challenges. School-provided systems lacked long-term data aggregation, and Formative stored detailed analytics for only two weeks. To maintain longitudinal performance tracking, I developed a manual data extraction workflow supported by a custom spreadsheet system.

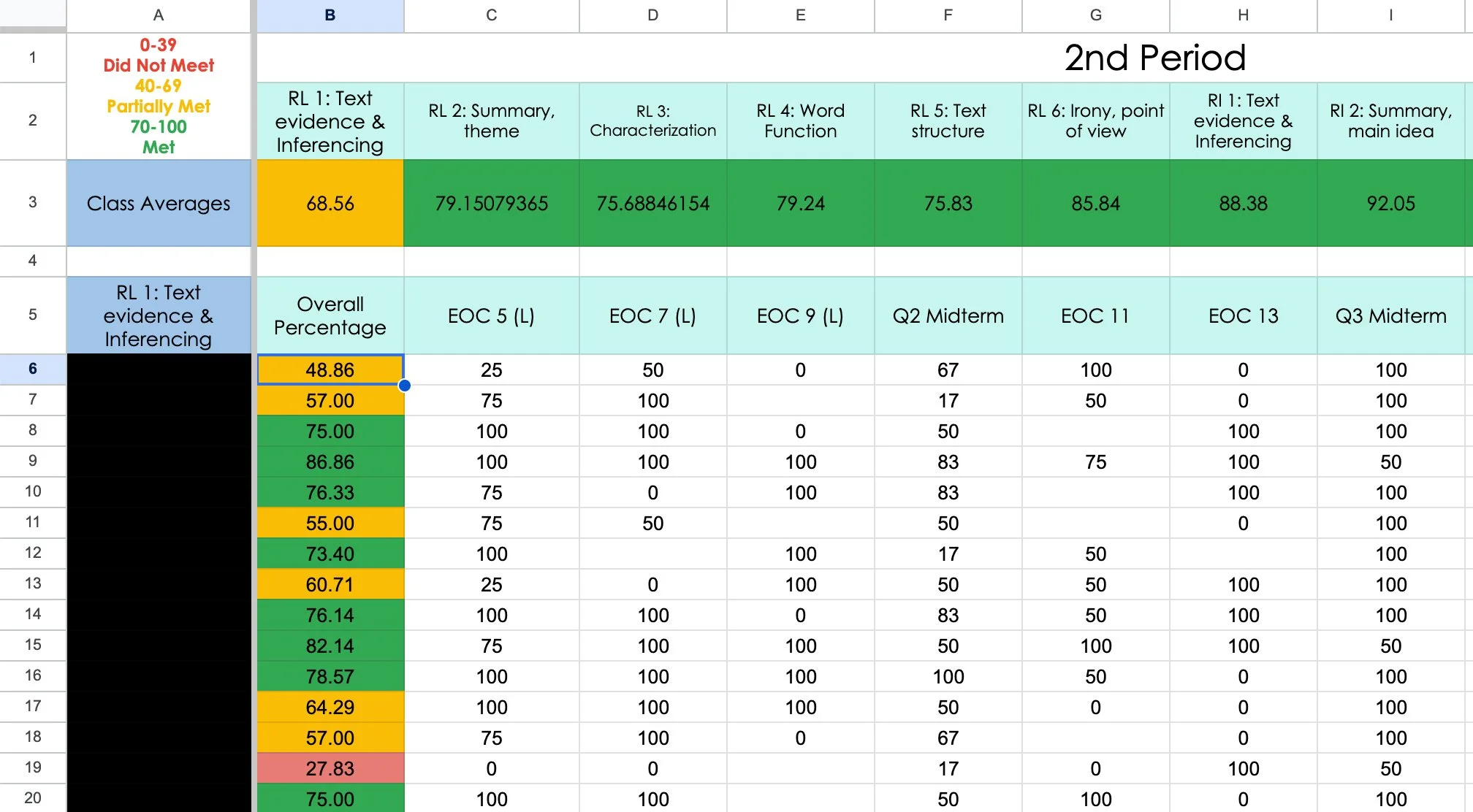

Sample data aggregation spreadsheet tracking individual students' proficiency on specific ELA standards measured in the assessments.

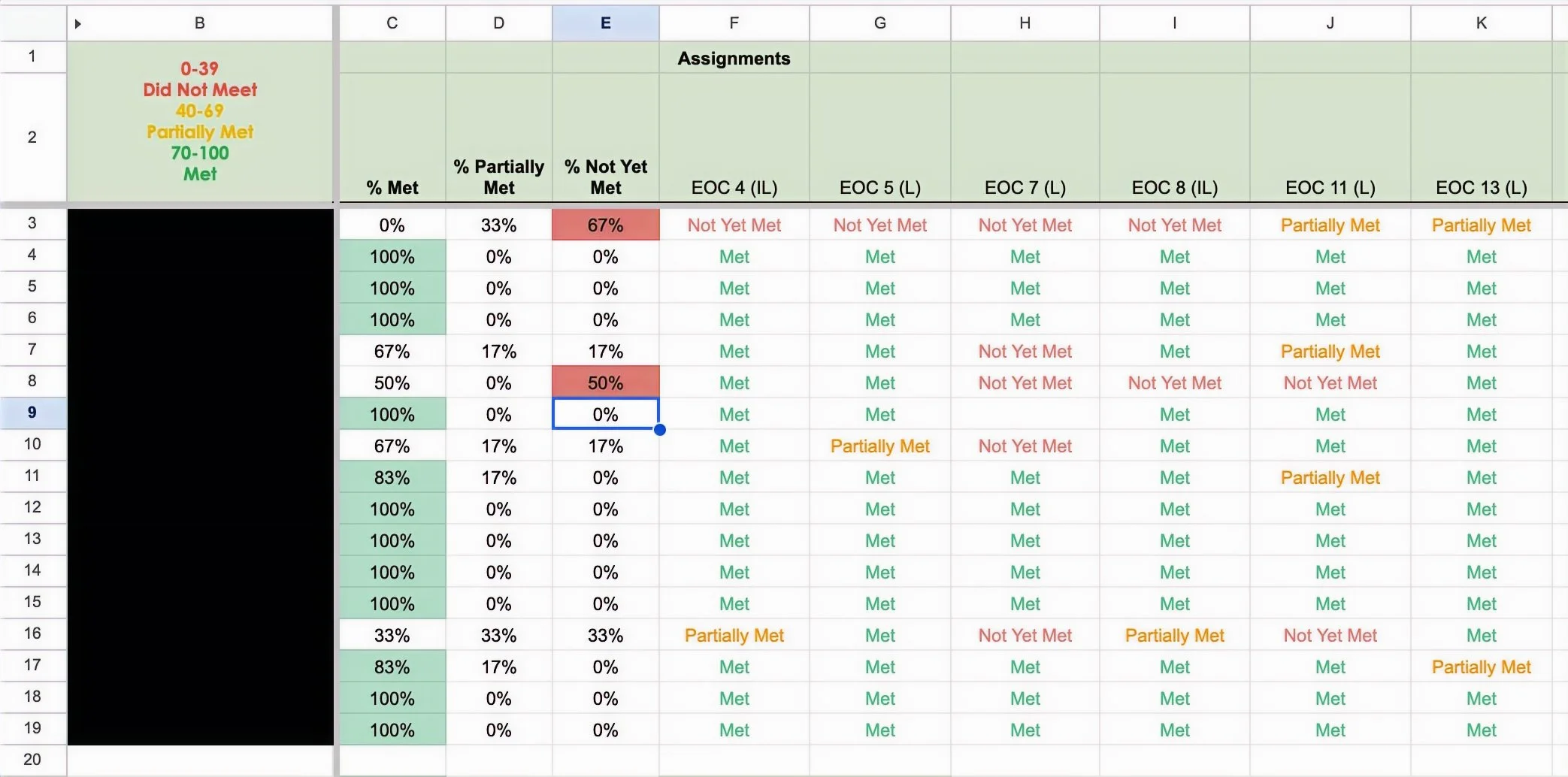

Sample data aggregation spreadsheet for a single class's overall proficiency.
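The two spreadsheet views differ only in how item-level results are grouped: by student and standard, or by standard (or everything) for the whole class. The Python sketch below mirrors that grouping logic under stated assumptions; the actual system was built in Google Sheets, and the `ItemResult` row layout, the `percent_by` helper, the student IDs, and the standard codes are hypothetical placeholders.

```python
from collections import defaultdict
from collections.abc import Callable, Hashable
from typing import NamedTuple


class ItemResult(NamedTuple):
    """One exported response: a student's points on an item tagged to a standard."""
    student: str
    standard: str  # placeholder code, not an official NC standard label
    earned: int
    possible: int


def percent_by(rows: list[ItemResult],
               key: Callable[[ItemResult], Hashable]) -> dict:
    """Percent of possible points earned, grouped by whatever key(row) returns.

    Assumes every group has at least one possible point.
    """
    earned: dict = defaultdict(int)
    possible: dict = defaultdict(int)
    for row in rows:
        k = key(row)
        earned[k] += row.earned
        possible[k] += row.possible
    return {k: 100 * earned[k] / possible[k] for k in earned}


# Hypothetical rows copied out of a platform export before its analytics expire.
rows = [
    ItemResult("S01", "RI.1", 2, 2),
    ItemResult("S01", "RI.6", 1, 2),
    ItemResult("S02", "RI.1", 1, 2),
    ItemResult("S02", "RI.6", 2, 2),
    ItemResult("S03", "RI.1", 2, 2),
    ItemResult("S03", "RI.6", 0, 2),
]

# Per-student view: one value per (student, standard) pair.
by_student_standard = percent_by(rows, lambda r: (r.student, r.standard))
# Class views: one value per standard, and one overall value for the class.
by_class_standard = percent_by(rows, lambda r: r.standard)
class_overall = percent_by(rows, lambda r: "all")["all"]

for standard, pct in sorted(by_class_standard.items()):
    print(f"{standard}: {pct:.1f}%")
print(f"overall: {class_overall:.1f}%")
```

Keeping one grouping helper and changing only the key function is the code equivalent of building both spreadsheet tabs from the same raw export, so the per-student and class views cannot drift apart.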

Content sourcing and pacing also required adjustment. Texts were limited to public-domain works or materials from approved platforms, and assessment timelines were extended from one week to two weeks to accommodate students’ full course loads.

Evaluation & Impact

Evaluation was structured using Kirkpatrick Levels 2–4 to measure both learning and real-world performance outcomes.

Level 2 – Learning

Analytical assessments administered every two weeks tracked proficiency growth over time. During the initial implementation phase (2023–2024), approximately 65% of learners met the 70% proficiency benchmark.

Following iterative assessment refinements and the introduction of written justification requirements, proficiency increased to 78% of learners meeting benchmark levels during the 2025–2026 school year to date.
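The Level 2 figures are shares of learners at or above a 70% score, not average scores. A minimal sketch of that calculation follows, assuming "meeting benchmark" means a score of at least 70%; the `share_meeting_benchmark` helper and the scores are hypothetical and are not drawn from the classroom data.

```python
BENCHMARK = 70.0  # proficiency threshold in percent, as described above


def share_meeting_benchmark(scores, benchmark=BENCHMARK):
    """Percent of learners whose score is at or above the benchmark."""
    if not scores:
        raise ValueError("no scores provided")
    met = sum(1 for s in scores if s >= benchmark)
    return 100 * met / len(scores)


# Hypothetical overall scores for one small section.
scores = [82, 65, 71, 90, 68, 74, 77, 59]
print(f"{share_meeting_benchmark(scores):.1f}% met the benchmark")
```

Reporting the share of learners at benchmark, rather than a class mean, keeps a few very high scores from masking students who are still below proficiency.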

Level 3 – Behavior

Written justification significantly changed learner behavior. Students were required to defend answer choices with textual evidence and explain why distractors were incorrect. This reduced superficial guessing, increased cognitive engagement, and strengthened independent analytical reasoning.

Students who articulated their reasoning were also more successful during self-correction cycles, demonstrating improved ability to identify and repair mistakes.

Level 4 – Results

Impact was validated through performance on the state End-of-Course (EOC) assessment.

2023–2024: +16.1 points above state-projected benchmarks; ~91% of students exceeded modeled expectations

2024–2025: +16.5 points above projections; ~93% exceeded expectations

Across both cohorts, student performance exceeded external growth projections by ~16 points on average, demonstrating sustained alignment between classroom assessment design and high-stakes testing outcomes.
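The "~16 points on average" figure is consistent with an unweighted mean of the two cohort results, writing $\bar{d}$ for the average margin above projection. Cohort sizes are not reported here, so a size-weighted mean cannot be computed, but it would necessarily fall between 16.1 and 16.5 as well.

$$
\bar{d} = \frac{16.1 + 16.5}{2} = 16.3 \text{ points above projection}
$$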